The preceding acts describe what predictive scoring measures, why it matters, and how reliable it is. This act answers the question that follows: how do you build it?

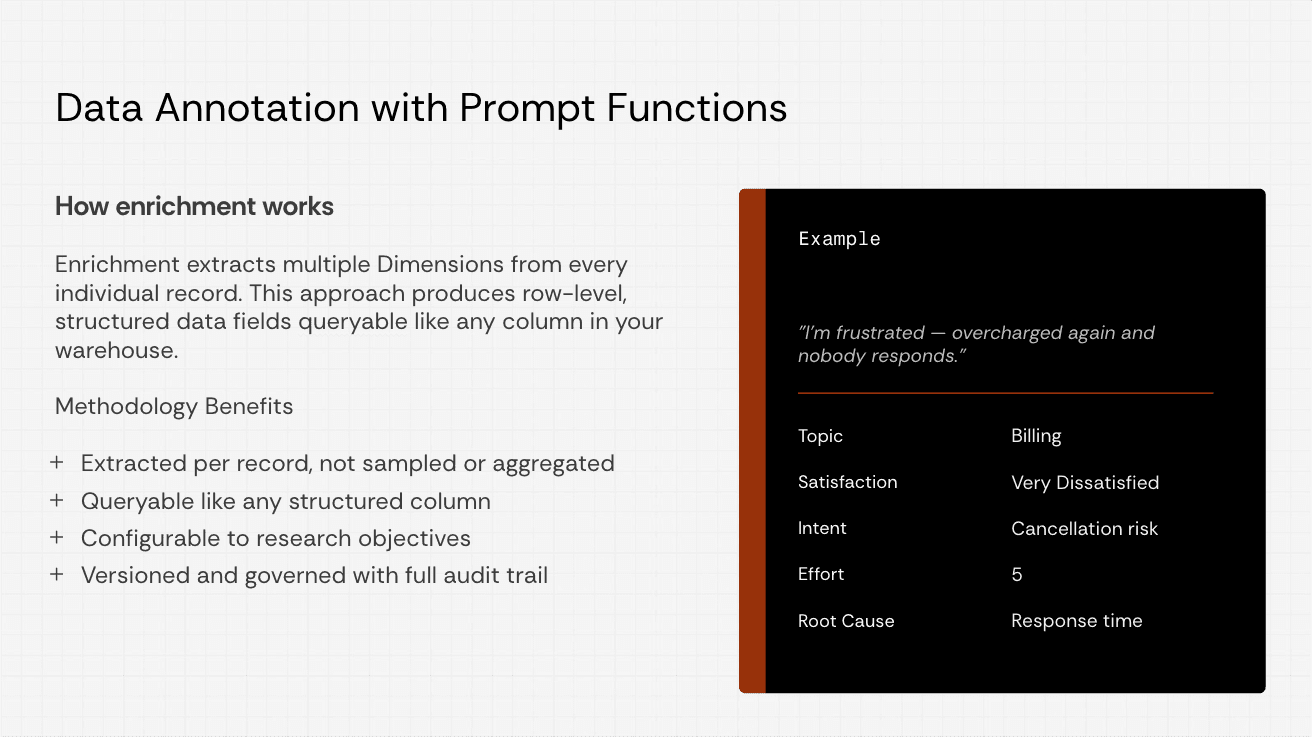

A prompt function is the unit of execution. It is a structured schema — a JSON object — that defines every analytical task the model will perform on a single record. The schema specifies the fields, their types, their descriptions (which serve as the model's instructions), and the order in which they execute. One prompt function, applied to every record in a dataset, produces a table of enriched fields that join with existing metadata.

Everything in this section is designed to be referenced repeatedly. The decision framework tells you what to score. The construction rules tell you how to write effective field descriptions. The quick-start schemas give you a working starting point you can adapt. The validation checklist tells you what to check before trusting the output.

Choosing what to score

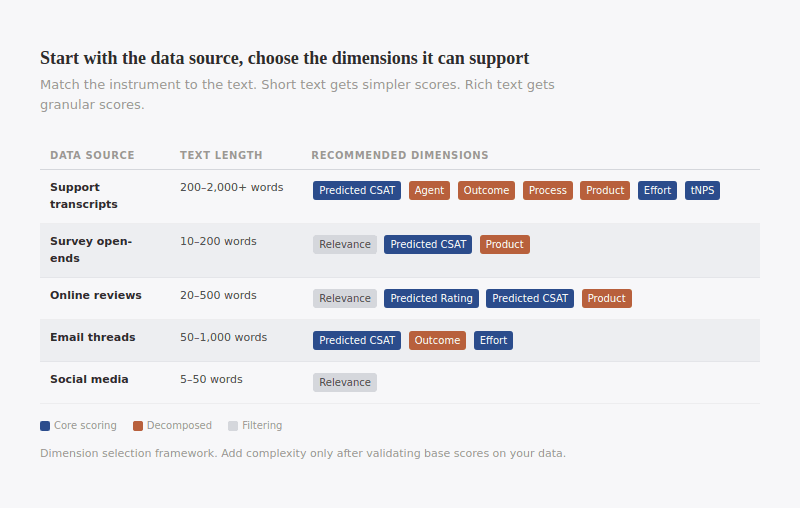

The first decision is not how to build the prompt function. It is which scoring dimensions to include. The answer depends on two factors: how much text your records contain and what analytical questions you need to answer.

| Data source | Typical text length | Recommended dimensions | Why |
| --- | --- | --- | --- |
| Support transcripts (call, chat) | 200–2,000+ words | Predicted Satisfaction, Agent Satisfaction, Outcome Satisfaction, Process Satisfaction, Product Satisfaction, Predicted Effort, Transactional NPS | Long text with clear turn-taking supports full decomposition. This is the richest data source for predictive scoring. |
| Survey open-ended responses | 10–200 words | Relevance, Predicted Satisfaction, Product Satisfaction | Text is direct but often short. Decomposition is unreliable below ~50 words. Start with composite scores. |
| Online reviews | 20–500 words | Relevance, Predicted Rating, Predicted Satisfaction, Product Satisfaction | Reviews are written to express opinion, so signal is strong. Agent and effort scores are rarely applicable. |
| Email threads | 50–1,000 words | Predicted Satisfaction, Outcome Satisfaction, Predicted Effort | Formal register. Multi-topic threads complicate scoring. Focus on resolution and effort. |
| Social media posts | 5–50 words | Relevance only (then Predicted Rating on relevant subset) | Most posts are not experiential. Run relevance scoring first; apply experience scores only to high-relevance records. |

The principle behind this table: match the instrument to the signal density of the text. A three-word review ("Great product, thanks!") supports a composite satisfaction prediction. It does not support four decomposed dimensions. A twenty-minute support transcript supports full decomposition and would waste analytical potential on a single composite. Short text gets simpler scores. Rich text gets granular scores. This is not a quality hierarchy — it is a signal density hierarchy.

Start with fewer dimensions and add complexity as you validate. A prompt function with Relevance + Predicted Satisfaction + Confidence + Evidence is a strong starting point for any data source. Add decomposed dimensions once you have confirmed that the base scores are reliable on your data.
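The decision table can be expressed as a small lookup, which is convenient when one pipeline handles several data sources. The sketch below is illustrative only: the source keys, field names, and the `recommend_dimensions` helper are hypothetical, and applying the ~50-word decomposition threshold to every source (the table states it only for survey responses) is an assumption.

```python
# Hypothetical lookup mirroring the decision table; keys and field names are illustrative.
RECOMMENDED = {
    "support_transcript": [
        "predicted_csat", "agent_satisfaction", "outcome_satisfaction",
        "process_satisfaction", "product_satisfaction",
        "predicted_effort", "transactional_nps",
    ],
    "survey_open_end": ["relevance", "predicted_csat", "product_satisfaction"],
    "online_review": ["relevance", "predicted_rating", "predicted_csat", "product_satisfaction"],
    "email_thread": ["predicted_csat", "outcome_satisfaction", "predicted_effort"],
    "social_media": ["relevance"],  # experience scores run later, on the relevant subset
}

# Composite (non-decomposed) dimensions that short text can still support.
COMPOSITE = {"relevance", "predicted_csat", "predicted_rating"}

# Assumption: the ~50-word guidance for surveys is applied to all sources.
DECOMPOSITION_MIN_WORDS = 50


def recommend_dimensions(source: str, word_count: int) -> list[str]:
    """Return the dimensions to score for one data source and text length."""
    # Unknown sources fall back to the base starting point.
    dims = RECOMMENDED.get(source, ["relevance", "predicted_csat"])
    if word_count < DECOMPOSITION_MIN_WORDS:
        # Short text gets simpler scores: keep only the composite dimensions.
        return [d for d in dims if d in COMPOSITE]
    return dims
```

Calling `recommend_dimensions("survey_open_end", 20)` keeps only the relevance and composite satisfaction fields, while an 800-word support transcript keeps the full decomposition.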

Anatomy of a prompt function

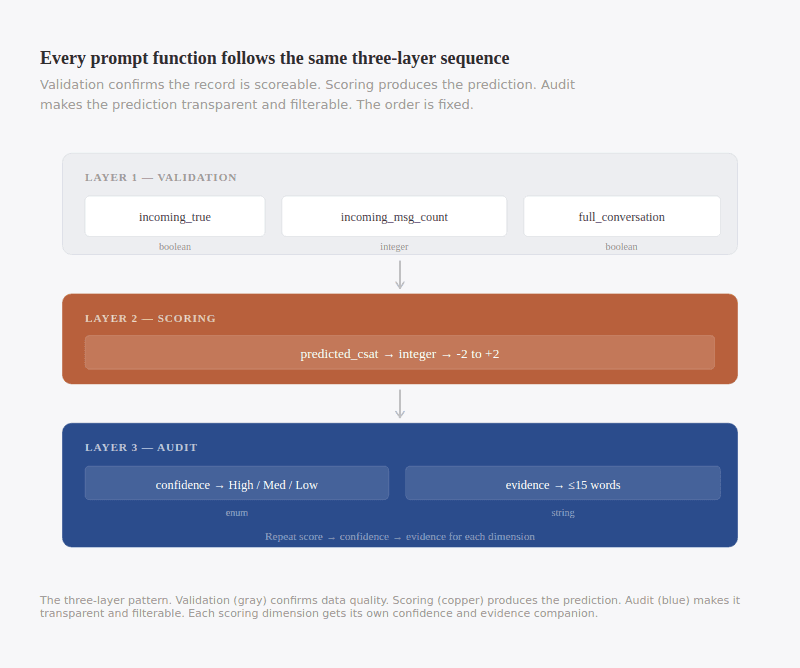

Every prompt function follows a three-layer pattern. The layers execute in order, and the order matters.

Layer 1: Validation

This layer is analogous to a screener on a survey. A political poll first asks whether a respondent is registered to vote, which establishes eligibility to participate in the upcoming election. A consumer research survey asks whether someone has purchased in a given category, which ensures the respondent is not a rejector of that category.

These fields are not analytical. They are data quality checks that confirm whether the record is scoreable before the model attempts any prediction. Examples: Does the transcript contain incoming customer messages? Is this a complete conversation or a fragment? How many messages are present? Validation fields prevent the model from scoring records that lack sufficient signal — the programmatic equivalent of null conditions.

Layer 2: Annotation

These are the predictive dimensions: satisfaction, effort, NPS, decomposed scores, topic classifications. Each field has a description that serves as the model's instruction, a type (integer, string, boolean), and — for categorical or ordinal fields — an explicit set of valid values. Scoring fields execute after validation fields, which means the model has already confirmed that the record contains scoreable content before it attempts a prediction.

[Figure labels: Scoring; Predefined; Dynamic]

Layer 3: Audit

Every scoring field is followed by two companion fields: a confidence score and an evidence field. Together they form an audit layer that makes each prediction transparent, filterable, and spot-checkable. The audit fields execute after the scoring field they accompany, which means the model has already committed to a prediction before it assesses its own certainty.

The three layers always appear in this order. A prompt function that scores before validating, or that omits the audit layer, will produce data that is harder to trust and harder to debug.

LAYER 1 — VALIDATION

incoming_true → boolean (does the record contain customer messages?)

incoming_message_count → integer (how many?)

full_conversation_true → boolean (is this a complete conversation?)

LAYER 2 — SCORING

predicted_csat → integer (the predictive score, -2 to +2)

LAYER 3 — AUDIT

predicted_csat_confidence → enum (High / Medium / Low)

predicted_csat_evidence → string (fewer than 15 words citing the textual basis)

If the prompt function contains multiple scoring dimensions (e.g., predicted satisfaction + agent satisfaction + effort), each scoring field gets its own confidence and evidence companion. The sequence repeats: score → confidence → evidence, score → confidence → evidence. The model processes fields in order, so each prediction inherits context from every field that precedes it.
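Because field order carries meaning, it can help to generate the `properties` object rather than hand-order it. The following is a minimal sketch, assuming the platform preserves JSON key order as execution order (as the text describes); `build_properties` is a hypothetical helper, and the companion descriptions reuse the wording of the quick-start schemas.

```python
def build_properties(validation: dict, scores: dict) -> dict:
    """Assemble fields in execution order: validation first, then
    score -> confidence -> evidence for every scoring dimension."""
    properties = dict(validation)  # Layer 1: validation fields come first
    for name, spec in scores.items():
        properties[name] = spec  # Layer 2: the scoring field itself
        # Layer 3: audit companions, always directly after their score
        properties[f"{name}_confidence"] = {
            "description": f"Confidence in {name}. High/Medium/Low. Null if score is null.",
            "type": "string",
            "enum": ["High", "Medium", "Low"],
        }
        properties[f"{name}_evidence"] = {
            "description": (
                f"Evidence for {name} in <15 words, all lower case, "
                "no punctuation. Null if score is null."
            ),
            "type": "string",
        }
    return properties


# Illustrative usage with abbreviated descriptions.
example_properties = build_properties(
    validation={"incoming_true": {"description": "True if customer messages exist.", "type": "boolean"}},
    scores={
        "predicted_csat": {"description": "Overall satisfaction, -2 to +2.", "type": "integer"},
        "predicted_effort": {"description": "Customer effort, 1-5.", "type": "integer"},
    },
)
```

Python dictionaries preserve insertion order, so serializing `example_properties` with `json.dumps` keeps the validation, score, confidence, evidence sequence intact.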

[Figure labels: Order matters; Field name matters; String / Integer / Boolean]

Writing effective field descriptions

The description field in the JSON schema is the model's instruction: these are the prompts within the prompt function. Our preferred term for them is dimensions, or dimensions of analysis, because each prompt is effectively a separate analytical task that extracts information from the text.

The description is the single most consequential design decision in a prompt function. A well-written description produces reliable, interpretable output. A poorly written one produces scores that look plausible but drift across runs and resist validation.

Behavioral anchors

Do not label scale levels with abstract adjectives. "Poor," "Fair," "Good," "Excellent" are unstable: the model interprets them using priors from its training data, and those priors shift across runs and model versions. Instead, anchor each level to observable evidence the model can identify in the text.

Undirected

Rate satisfaction from 1–5 where:

1 = Very Poor

2 = Poor

3 = Average

4 = Good

5 = Excellent

Directed

Rate satisfaction from -2 to +2 where:

-2 = Very Dissatisfied: explicit frustration, unresolved issue, language indicating the experience was harmful or damaging

-1 = Somewhat Dissatisfied: expressed disappointment, partial resolution, lingering concerns

0 = Neither: functional interaction with no meaningful emotional signal in either direction

+1 = Somewhat Satisfied: positive indicators, issue addressed, customer acknowledged resolution

+2 = Very Satisfied: explicit gratitude, praise for the experience, strong positive language, issue fully resolved

The difference: the undirected version asks the model to apply its own definition of "Good." The directed version tells the model what to look for at each level.
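One way to keep anchors from eroding as descriptions are edited is to store them as data and render the description text from that. This is a sketch under assumptions: `render_scale_description` and `CSAT_ANCHORS` are hypothetical names, and the rule that rejects a level with no observable evidence is an illustration of the principle, not part of any platform.

```python
# Anchors copied from the directed example: level -> (label, observable evidence).
CSAT_ANCHORS = {
    -2: ("Very Dissatisfied", "explicit frustration, unresolved issue, language indicating the experience was harmful or damaging"),
    -1: ("Somewhat Dissatisfied", "expressed disappointment, partial resolution, lingering concerns"),
    0: ("Neither", "functional interaction with no meaningful emotional signal in either direction"),
    1: ("Somewhat Satisfied", "positive indicators, issue addressed, customer acknowledged resolution"),
    2: ("Very Satisfied", "explicit gratitude, praise for the experience, strong positive language, issue fully resolved"),
}


def render_scale_description(construct: str, anchors: dict, null_rule: str) -> str:
    """Render a directed scale description; refuse levels that carry only a label."""
    levels = sorted(anchors)
    signed = levels[0] < 0  # show +1/+2 only on scales that also have negatives

    def fmt(level: int) -> str:
        return f"{level:+d}" if signed and level != 0 else str(level)

    lines = [f"Rate {construct} from {fmt(levels[0])} to {fmt(levels[-1])} where:"]
    for level in levels:
        label, evidence = anchors[level]
        if not evidence.strip():
            # An adjective with no evidence is exactly the undirected pattern.
            raise ValueError(f"level {level} has a label but no observable evidence")
        lines.append(f"{fmt(level)} = {label}: {evidence}")
    lines.append(null_rule)
    return "\n".join(lines)


csat_description = render_scale_description(
    "satisfaction", CSAT_ANCHORS, "Null if insufficient signal."
)
```

Keeping anchors in a dictionary also makes it straightforward to diff two versions of a scale when iterating on the prompt function.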

Integer scales

Use integers, not continuous values. LLMs classify more reliably than they regress. A model asked to output a score of 3.7 is making a precision claim its architecture does not support. Integer scales (1–5, -2 to +2, 0–10) match the ordinal nature of the construct and produce tighter distributions across runs.

Null conditions

Every scoring field must include an explicit instruction for when the model should refuse to score. Without null conditions, the model defaults to the center of the scale when evidence is ambiguous. Those false neutrals compress variance, inflate the middle of the distribution, and reduce the statistical power of every downstream test.

THE FALSE-NEUTRAL SPIKE

When an LLM encounters a record with insufficient signal, its default behavior is to produce the center of the scale. At scale, these false neutrals compress variance and reduce statistical power. Explicit null conditions convert unscoreable records into missing values that preserve data integrity.

Effective null conditions name specific triggers:

If there is not enough information to assign a score with confidence, output null value. Specifically:

- The text contains fewer than five words of substantive content

- No experience is described (the text is a question, a greeting, or an administrative message)

- The record is a repost, forwarded message, or automated notification with no original content

The null rate in your output is informative. A null rate below 5% on social media data suggests the null conditions are too loose — most social posts should not be scored. A null rate above 50% on support transcripts suggests the null conditions are too strict or the data contains a large fraction of bot-only interactions.

Isolation instructions for decomposed scores

When running multiple satisfaction dimensions on the same record, each dimension must be explicitly instructed to score independently. Without isolation, the model reads the customer's overall emotional state and attributes it uniformly across all dimensions. An agent who performs well during a frustrating product issue gets penalized on agent satisfaction because the model senses overall frustration.

Add isolation language to each decomposed field:

AGENT SATISFACTION

Score the customer's satisfaction with the agent specifically, independent of the outcome, the product, or the process. A customer whose issue was not resolved but who was treated with skill, empathy, and professionalism scores high on this dimension. Do not let dissatisfaction with other aspects of the experience influence this score.

The phrases "independent of" and "do not let" are the load-bearing instructions. Without them, decomposition collapses into the composite it was designed to replace.
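Since the isolation language is easy to drop during edits, a simple lint over the schema can confirm it is present before a run. The sketch below assumes the schema layout used in the quick-start examples; `missing_isolation` is a hypothetical helper, and literal phrase matching is a deliberately crude check that will miss paraphrases.

```python
ISOLATION_PHRASES = ("independent of", "do not let")


def missing_isolation(properties: dict, decomposed_fields: list[str]) -> dict:
    """Map each decomposed field to the isolation phrases its description lacks."""
    report = {}
    for field in decomposed_fields:
        description = properties[field]["description"].lower()
        missing = [p for p in ISOLATION_PHRASES if p not in description]
        if missing:
            report[field] = missing
    return report


# Illustrative input using two descriptions in the style of the quick-start schemas.
sample_properties = {
    "agent_satisfaction": {
        "description": (
            "Satisfaction with the agent specifically, independent of outcome, "
            "product, or process. Do not let dissatisfaction with other aspects "
            "influence this score."
        )
    },
    "outcome_satisfaction": {
        "description": "Whether the customer's issue was resolved. Score 1-10."
    },
}
isolation_report = missing_isolation(
    sample_properties, ["agent_satisfaction", "outcome_satisfaction"]
)
```

Here the agent field passes, and the outcome field is reported as lacking both phrases, which is a prompt to review its wording rather than proof of a defect.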

The audit layer

Every predictive score in a production prompt function should be followed by two companion fields: confidence and evidence. Together they form an audit layer that makes the prediction transparent, filterable, and spot-checkable.

Confidence scoring

The confidence field asks the model to assess how much textual signal supported the score it just assigned. It is not a separate analytical task — it is a companion to the prediction it follows.

CONFIDENCE

Assess confidence in the assigned score based on the strength and clarity of signals in the text.

High — Clear, unambiguous signals through explicit language, tone, repeated indicators.

Medium — Inferable but not explicit, or mixed signals present.

Low — Best guess from limited or ambiguous language.

If the score is null, output null.

The operational value is substantial. Dimension Labs' validation research found that filtering to high-confidence predictions raised within ±1 agreement from 67% to 82% and roughly doubled exact-match accuracy. Build this filter into reporting from the start: high-confidence predictions for executive-facing dashboards, full output for analytical deep-dives, low-confidence records flagged for review.
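The three reporting tiers described above can be sketched as a routing step over scored records. This is an illustration, assuming each record is a dictionary keyed by the schema's field names; `route_by_confidence` and the tier names are hypothetical.

```python
def route_by_confidence(records: list[dict], field: str = "predicted_csat") -> dict:
    """Split scored records into reporting tiers based on the confidence companion."""
    routed = {"dashboard": [], "analysis": [], "review": []}
    for record in records:
        if record.get(field) is None:
            continue  # null scores are missing values and belong to no tier
        routed["analysis"].append(record)  # full output for analytical deep-dives
        confidence = record.get(f"{field}_confidence")
        if confidence == "High":
            routed["dashboard"].append(record)  # executive-facing reporting
        elif confidence == "Low":
            routed["review"].append(record)  # flagged for human review
    return routed


# Illustrative records.
sample_records = [
    {"id": 1, "predicted_csat": 2, "predicted_csat_confidence": "High"},
    {"id": 2, "predicted_csat": 0, "predicted_csat_confidence": "Low"},
    {"id": 3, "predicted_csat": -1, "predicted_csat_confidence": "Medium"},
    {"id": 4, "predicted_csat": None, "predicted_csat_confidence": None},
]
tiers = route_by_confidence(sample_records)
```

Medium-confidence records appear only in the analysis tier; whether they also belong on dashboards is a reporting decision this sketch leaves open.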

Evidence fields

The evidence field forces the model to cite its reasoning in a single scannable line:

EVIDENCE

Detail the evidence for the assigned score using fewer than 15 words (all lower case, no punctuation). If the score is null, output null value.

The 15-word constraint is deliberate. It forces compression: "customer thanked agent and confirmed issue resolved" or "customer expressed frustration about repeated billing errors." A human reviewer can spot-check hundreds of predictions per hour by scanning evidence fields alone, without reading full transcripts. When an evidence field contradicts the score — a high satisfaction rating with evidence reading "customer threatened to cancel service" — that is a misclassification the team can catch and use to refine the prompt.
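The format constraints in the evidence instruction are mechanical, so they can be checked in code before any human review. A minimal sketch, assuming evidence arrives as a string or `None`; `evidence_problems` is a hypothetical helper, and treating every character in Python's `string.punctuation` (including apostrophes and hyphens) as a violation is a strict reading of "no punctuation".

```python
import string


def evidence_problems(evidence):
    """Return a list of format violations for one evidence value."""
    if evidence is None:
        return []  # null evidence is valid when the score is null
    problems = []
    if len(evidence.split()) >= 15:
        problems.append("too_long")  # instruction asks for fewer than 15 words
    if evidence != evidence.lower():
        problems.append("not_lowercase")
    if any(ch in string.punctuation for ch in evidence):
        problems.append("punctuation")
    return problems
```

A value such as `"customer thanked agent and confirmed issue resolved"` passes cleanly. Contradictions between evidence and score still need a human reader; this check only catches format drift.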

The three-field pattern

The sequence — score, confidence, evidence — is not arbitrary. Each field builds on the previous one: the model makes the prediction, assesses its own certainty, then cites the textual basis. This pattern repeats for every predictive score in the prompt function.

Quick-start schemas

The following schemas are working starting points. Copy, adapt the descriptions to your domain, and iterate based on the output.

Support transcripts (full decomposition)

{

"name": "support_enrichment",

"description": "Analysis of support conversations between agents and customers.",

"context": "Incoming messages are from the customer, outgoing messages are from the support agent.",

"parameters": {

"type": "object",

"properties": {

"incoming_true": {

"description": "True if the transcript contains any incoming customer messages.",

"type": "boolean"

},

"full_conversation_true": {

"description": "True if this transcript contains a full or substantive conversation. False if it appears to be a fragment. If unsure, label True.",

"type": "boolean"

},

"predicted_csat": {

"description": "Overall satisfaction with the support experience, -2 to +2. -2=Very Dissatisfied (explicit frustration, unresolved, harmful). -1=Somewhat Dissatisfied (disappointment, partial resolution). 0=Neither (functional, no emotional signal). +1=Somewhat Satisfied (positive, issue addressed). +2=Very Satisfied (gratitude, praise, fully resolved). Null if insufficient signal.",

"type": "integer"

},

"predicted_csat_confidence": {

"description": "Confidence in predicted_csat. High=clear unambiguous signals. Medium=inferable but mixed. Low=best guess. Null if score is null.",

"type": "string",

"enum": ["High", "Medium", "Low"]

},

"predicted_csat_evidence": {

"description": "Evidence for predicted_csat in <15 words, all lower case, no punctuation. Null if score is null.",

"type": "string"

},

"agent_satisfaction": {

"description": "Satisfaction with the agent specifically, independent of outcome, product, or process. Score 1-10. High=skillful, empathetic, clear communication. Low=dismissive, unclear, unhelpful. Do not let dissatisfaction with other aspects influence this score. Null if no agent interaction or insufficient signal.",

"type": "integer"

},

"agent_satisfaction_confidence": {

"description": "Confidence in agent_satisfaction. High/Medium/Low. Null if score is null.",

"type": "string",

"enum": ["High", "Medium", "Low"]

},

"outcome_satisfaction": {

"description": "Whether the customer's issue was resolved. Score 1-10. High=complete resolution confirmed by customer. Low=unresolved or workaround only. Null if no substantive issue or insufficient signal.",

"type": "integer"

},

"outcome_satisfaction_confidence": {

"description": "Confidence in outcome_satisfaction. High/Medium/Low. Null if score is null.",

"type": "string",

"enum": ["High", "Medium", "Low"]

},

"predicted_effort": {

"description": "How hard the customer had to work. Score 1-5 (5=most effort). Consider: repeat contacts, transfers, re-explaining, process complexity, wait time. Null if insufficient signal.",

"type": "integer"

},

"predicted_effort_confidence": {

"description": "Confidence in predicted_effort. High/Medium/Low. Null if score is null.",

"type": "string",

"enum": ["High", "Medium", "Low"]

}

},

"required": ["incoming_true", "full_conversation_true", "predicted_csat", "predicted_csat_confidence", "predicted_csat_evidence", "agent_satisfaction", "agent_satisfaction_confidence", "outcome_satisfaction", "outcome_satisfaction_confidence", "predicted_effort", "predicted_effort_confidence"]

}

}

Survey open-ends (composite + product)

{

"name": "survey_enrichment",

"description": "Analysis of open-ended survey responses.",

"parameters": {

"type": "object",

"properties": {

"relevance": {

"description": "Score 0-10 for relevance to a firsthand customer experience. 10=clearly experiential. 0=not relevant (generic comment, spam, empty). Lower scores: secondhand, news, jokes, advocacy.",

"type": "integer"

},

"predicted_csat": {

"description": "Overall satisfaction, -2 to +2. -2=Very Dissatisfied. -1=Somewhat Dissatisfied. 0=Neither. +1=Somewhat Satisfied. +2=Very Satisfied. Null if text is fewer than 5 substantive words or relevance < 3.",

"type": "integer"

},

"predicted_csat_confidence": {

"description": "Confidence in predicted_csat. High/Medium/Low. Null if score is null.",

"type": "string",

"enum": ["High", "Medium", "Low"]

},

"predicted_csat_evidence": {

"description": "Evidence for predicted_csat in <15 words, all lower case, no punctuation. Null if score is null.",

"type": "string"

},

"product_satisfaction": {

"description": "Satisfaction with the product or service itself, independent of support or process. Score 1-10. High=product praised, reliability noted, positive comparison. Low=defects, design complaints, regret. Null if no product-relevant content.",

"type": "integer"

},

"product_satisfaction_confidence": {

"description": "Confidence in product_satisfaction. High/Medium/Low. Null if score is null.",

"type": "string",

"enum": ["High", "Medium", "Low"]

}

},

"required": ["relevance", "predicted_csat", "predicted_csat_confidence", "predicted_csat_evidence", "product_satisfaction", "product_satisfaction_confidence"]

}

}

Reviews (composite + relevance)

{

"name": "review_enrichment",

"description": "Analysis of online customer reviews.",

"parameters": {

"type": "object",

"properties": {

"relevance": {

"description": "Score 0-10 for relevance to a firsthand product or service experience. 10=detailed firsthand account. 0=spam, irrelevant, or not a genuine review.",

"type": "integer"

},

"predicted_rating": {

"description": "Estimated overall experience rating, 1-10 (10=best). Use only evidence in the text. Null if relevance < 3 or insufficient signal.",

"type": "integer"

},

"predicted_rating_confidence": {

"description": "Confidence in predicted_rating. High/Medium/Low. Null if score is null.",

"type": "string",

"enum": ["High", "Medium", "Low"]

},

"predicted_rating_evidence": {

"description": "Evidence for predicted_rating in <15 words, all lower case, no punctuation. Null if score is null.",

"type": "string"

}

},

"required": ["relevance", "predicted_rating", "predicted_rating_confidence", "predicted_rating_evidence"]

}

}
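Before loading model output into a warehouse, each returned record can be checked against the schema that produced it. The sketch below uses only the standard library and makes one loud assumption: a null value is accepted for any field, because the descriptions instruct the model to return null even though the declared types are `integer` and `string`. `validate_record` is a hypothetical helper, not part of any platform API.

```python
import json

TYPE_MAP = {"integer": int, "string": str, "boolean": bool}


def validate_record(record: dict, schema: dict) -> list[str]:
    """Return a list of problems found in one model response."""
    params = schema["parameters"]
    props = params["properties"]
    errors = [f"{name}: missing" for name in params.get("required", []) if name not in record]
    for name, value in record.items():
        spec = props.get(name)
        if spec is None:
            errors.append(f"{name}: not in schema")
            continue
        if value is None:
            continue  # assumption: nulls are legitimate missing values
        expected = TYPE_MAP[spec["type"]]
        # bool is a subclass of int in Python, so reject True/False for integer fields
        wrong_type = not isinstance(value, expected) or (
            expected is int and isinstance(value, bool)
        )
        if wrong_type:
            errors.append(f"{name}: expected {spec['type']}")
        elif "enum" in spec and value not in spec["enum"]:
            errors.append(f"{name}: {value!r} not in enum")
    return errors


# Illustrative usage with a trimmed-down schema and a raw JSON response.
mini_schema = {
    "parameters": {
        "properties": {
            "incoming_true": {"type": "boolean"},
            "predicted_csat": {"type": "integer"},
            "predicted_csat_confidence": {"type": "string", "enum": ["High", "Medium", "Low"]},
        },
        "required": ["incoming_true", "predicted_csat", "predicted_csat_confidence"],
    }
}
raw = '{"incoming_true": true, "predicted_csat": 1.5, "predicted_csat_confidence": "Certain"}'
problems = validate_record(json.loads(raw), mini_schema)
```

If your platform enforces the schema strictly, the null handling may need to change, for example by declaring nullable types explicitly.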

Validating your output

The first run on a new dataset will surface problems. Expect to iterate. The following checks should be run before the output is used for any downstream analysis.

Distribution check

Plot the distribution of each scoring field. A healthy distribution for satisfaction scores on support data typically leans positive: more records in the +1/+2 range than in the -1/-2 range, with a smaller cluster at 0. Warning signs:

• Spike at the center of the scale (e.g., 60%+ of records at 0 on a -2 to +2 scale) — the null conditions are too loose or missing entirely. The model is defaulting to neutral when it lacks evidence.

• No nulls: the null conditions are missing, or their triggers are too narrow to ever fire. Every dataset contains records that should not be scored.

• Bimodal distribution (spikes at both extremes, hollow middle) — the behavioral anchors may be too polarized, leaving the model nowhere to put moderate experiences.
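The three warning signs above can be screened automatically before anyone looks at a plot. This is a rough sketch: `distribution_warnings` is a hypothetical helper, the 60% center limit comes from the text, and the 80% share used to call a distribution bimodal is an assumption chosen for illustration.

```python
from collections import Counter


def distribution_warnings(scores, low=-2, high=2, center_limit=0.60, extreme_limit=0.80):
    """Flag a center spike, a total absence of nulls, or a hollow-middle distribution."""
    warnings = []
    scored = [s for s in scores if s is not None]
    if scores and len(scored) == len(scores):
        warnings.append("no_nulls")
    if not scored:
        return warnings
    counts = Counter(scored)
    total = len(scored)
    center = (low + high) // 2
    if counts[center] / total >= center_limit:
        warnings.append("center_spike")
    # Bimodal: both extremes are populated and together dominate the scored records.
    extremes = (counts[low] + counts[high]) / total
    if counts[low] and counts[high] and extremes >= extreme_limit:
        warnings.append("bimodal")
    return warnings
```

The function only raises flags; a flagged field still deserves a plot, since thresholds like these cannot tell a genuine pattern in the data from a prompt problem.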

Null rate check

The null rate tells you how much of your data the model judged unscorable. Expected ranges by data source:

| Data source | Expected null rate | If too low | If too high |
| --- | --- | --- | --- |
| Support transcripts | 5–15% | Null conditions too loose: bot-only and fragment records are being scored | Null conditions too strict, or the data contains many very short exchanges |
| Survey open-ends | 10–25% | Short/empty responses are being force-scored | Check minimum word threshold |
| Reviews | 5–10% | Spam and irrelevant reviews are passing through | Relevance threshold may be too high |
| Social media | 40–70% | Most social posts are not experiential: low null rate means non-experiential content is being scored | Correct if this matches the expected share of non-experiential content |
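The expected ranges in the table translate directly into a check that can run after every batch. A minimal sketch, assuming scores are a list in which unscoreable records are `None`; the source keys and `check_null_rate` are hypothetical names, and the ranges are copied from the table.

```python
# Expected null-rate ranges from the table, as (low, high) fractions.
EXPECTED_NULL_RATE = {
    "support_transcripts": (0.05, 0.15),
    "survey_open_ends": (0.10, 0.25),
    "reviews": (0.05, 0.10),
    "social_media": (0.40, 0.70),
}


def check_null_rate(scores, source):
    """Return (null_rate, verdict) where verdict is 'too_low', 'ok', or 'too_high'."""
    if not scores:
        raise ValueError("cannot compute a null rate on an empty batch")
    rate = sum(s is None for s in scores) / len(scores)
    low, high = EXPECTED_NULL_RATE[source]
    if rate < low:
        verdict = "too_low"
    elif rate > high:
        verdict = "too_high"
    else:
        verdict = "ok"
    return rate, verdict
```

A `too_high` verdict on social media may simply reflect the data, as the table notes, so treat the verdict as a pointer to the relevant table cell rather than a pass/fail gate.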

Confidence distribution

The split between High and Medium (and Low, if present) indicates how much of the dataset contains clear signal. A dataset where 80%+ of predictions are High confidence is strongly scoreable. A dataset where 60%+ are Medium suggests the text is ambiguous and the scores should be consumed with more caution.

If the confidence distribution does not match your expectation of the data quality, the behavioral anchors may not match the language patterns in your data. Adjust the description to use terminology and evidence patterns that appear in your actual records.
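The confidence split can be summarized in a few lines. In this sketch the 80% and 60% thresholds come from the text above, while the 95% inflation check is borrowed from the confidence-inflation symptom described under common failure modes; `confidence_profile` and the verdict labels are hypothetical.

```python
def confidence_profile(confidences):
    """Return (shares, verdict) for a list of High/Medium/Low/None confidence values."""
    labels = [c for c in confidences if c is not None]  # nulls accompany null scores
    if not labels:
        raise ValueError("no scored records to profile")
    shares = {level: labels.count(level) / len(labels) for level in ("High", "Medium", "Low")}
    if shares["High"] >= 0.95:
        verdict = "check_for_inflation"  # suspiciously uniform certainty
    elif shares["High"] >= 0.80:
        verdict = "strongly_scoreable"
    elif shares["Medium"] >= 0.60:
        verdict = "use_with_caution"
    else:
        verdict = "mixed"
    return shares, verdict
```

Comparing the verdict across data sources or prompt revisions is usually more informative than reading any single value in isolation.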

Evidence spot-check

Sample 50–100 records and read the evidence fields. Look for:

• Evidence contradicting the score — a predicted satisfaction of +2 with evidence reading "customer said they would never return" is a misclassification. This is the fastest way to find prompt problems.

• Generic evidence — evidence fields that all read "positive interaction" or "negative sentiment" without specificity suggest the model is not finding concrete textual evidence and may be guessing.

• Evidence that references information not in the text — the model should cite only what appears in the record. If evidence fields reference "the customer's history" or "previous interactions," the model is hallucinating context.
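Two of the three patterns above, generic evidence and references to information outside the record, can be pre-screened with string matching so that reviewers start with the most suspicious rows. This is a heuristic sketch: `spot_check`, the phrase lists, and the fixed random seed are all assumptions, and contradictions between score and evidence still require a human reader.

```python
import random

# Phrases taken from the examples in the text; extend them for your own data.
GENERIC_EVIDENCE = {"positive interaction", "negative sentiment"}
OUT_OF_RECORD_TERMS = ("history", "previous interaction", "prior interaction", "account status")


def spot_check(records, field, k=50, seed=0):
    """Sample up to k scored records and flag evidence that looks generic or ungrounded."""
    scored = [r for r in records if r.get(field) is not None]
    sample = random.Random(seed).sample(scored, min(k, len(scored)))
    flagged = []
    for record in sample:
        evidence = (record.get(f"{field}_evidence") or "").strip().lower()
        reasons = []
        if evidence in GENERIC_EVIDENCE:
            reasons.append("generic")
        if any(term in evidence for term in OUT_OF_RECORD_TERMS):
            reasons.append("possible_hallucination")
        if reasons:
            flagged.append((record, reasons))
    return flagged


# Illustrative records.
review_records = [
    {"predicted_csat": 2, "predicted_csat_evidence": "positive interaction"},
    {"predicted_csat": -1, "predicted_csat_evidence": "customer history shows repeated complaints"},
    {"predicted_csat": 1, "predicted_csat_evidence": "customer thanked agent and confirmed issue resolved"},
    {"predicted_csat": None, "predicted_csat_evidence": None},
]
flagged_records = spot_check(review_records, "predicted_csat")
```

A flagged row is a candidate for review, not a confirmed error: a record that genuinely mentions the customer's history will trip the second check.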

Iterate

Expect to revise the prompt function 2–3 times before the output is production-ready. The most common revisions: tightening null conditions (the model is scoring too many unscorable records), adjusting behavioral anchors (the scale levels do not map to the language patterns in the data), and adding isolation instructions (decomposed scores are converging toward the composite).

Design decisions and why they matter

Each design choice in the methodology traces to a specific problem it prevents.

| Design choice | Alternative | Why the alternative fails |
| --- | --- | --- |
| Progressive complexity (relevance → rating → CSAT → decomposition) | One-size-fits-all | Short text forced through decomposition produces unreliable scores; rich text limited to a composite wastes signal |
| Behavioral anchors at every scale level | Simple labels ("Poor" to "Excellent") | Model interprets labels using unstable priors; variance increases across runs |
| Integer scales | Continuous output (e.g., 3.7) | LLMs classify more reliably than they regress; implied precision exceeds actual precision |
| Explicit null conditions | Forced scoring on all records | Model defaults to scale center when uncertain; false neutrals compress variance, corrupt aggregates |
| Isolation instructions for decomposed scores | Unitary evaluation | Model distributes overall sentiment across all dimensions; decomposition collapses into the composite it was designed to replace |
| Confidence + evidence after every score | Score only | No way to filter by reliability; no way to spot-check without reading full transcripts; no way to diagnose prompt problems at scale |
| Validation fields before scoring fields | Score everything | Model scores fragments, bot-only transcripts, and empty records; data quality problems enter the warehouse undetected |

Common failure modes

These are the problems that appear most frequently in production deployments. Each is fixable with a targeted change to the prompt function.

The false-neutral spike. Symptom: an abnormally high proportion of records score at the center of the scale (0 on a -2/+2 scale, or 5 on a 1–10 scale). Cause: missing or weak null conditions. The model defaults to center when evidence is insufficient rather than returning null. Fix: add explicit null triggers. Check that the null instruction appears in the field description, not only in the top-level prompt.

Decomposition collapse. Symptom: decomposed dimensions (agent, outcome, process, product) correlate at r > 0.90 with each other and with the composite. The dimensions are not measuring different things. Cause: missing isolation instructions. The model reads overall satisfaction and distributes it across all fields. Fix: add "independent of" and "do not let" language to each decomposed field description.

Confidence inflation. Symptom: 95%+ of records receive High confidence, including records with short or ambiguous text. Cause: the confidence description is too permissive or the model is treating confidence as a formality rather than a genuine assessment. Fix: tighten the confidence criteria. Add specific examples: "High requires at least two explicit signals. A single word ('thanks') is Medium at best."

Evidence hallucination. Symptom: evidence fields reference information not present in the text — customer history, account status, prior interactions. Cause: the model is drawing on pretraining patterns rather than the actual record. Fix: add "cite only evidence that appears explicitly in the text of this record" to the evidence field description.

Score compression. Symptom: the distribution is tighter than expected — scores cluster in a narrow band (e.g., 90% of records score 6–8 on a 1–10 scale) despite varied text. Cause: behavioral anchors are not differentiated enough at adjacent scale levels. The model cannot distinguish between a 6 and a 7 because the descriptions are too similar. Fix: rewrite the anchors to create sharper distinctions between adjacent levels, with concrete examples of what textual evidence maps to each.

Null leakage. Symptom: records that should return null (empty text, bot-only interactions, administrative messages) receive a score anyway. Cause: the null condition in the description is too general ("if insufficient signal, output null") and the model is interpreting "insufficient" liberally. Fix: enumerate the specific conditions that should trigger null. Name them explicitly: "fewer than 5 words," "no customer message present," "automated system message only."
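The decomposition-collapse symptom (dimensions correlating at r > 0.90) is the one failure mode here that needs a statistic rather than a count. The sketch below computes Pearson correlations with the standard library only; `pearson` and `collapsed_pairs` are hypothetical helpers, and requiring at least three jointly scored records per pair is an arbitrary floor chosen for illustration.

```python
import math
from itertools import combinations


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences; None if either is constant."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return None  # correlation is undefined for a constant series
    return cov / math.sqrt(var_x * var_y)


def collapsed_pairs(records, fields, threshold=0.90, min_pairs=3):
    """Return (field_a, field_b, r) for every pair of dimensions with r above the threshold."""
    flagged = []
    for a, b in combinations(fields, 2):
        # Use only records where both dimensions were actually scored.
        pairs = [(r[a], r[b]) for r in records
                 if r.get(a) is not None and r.get(b) is not None]
        if len(pairs) < min_pairs:
            continue
        xs, ys = zip(*pairs)
        coefficient = pearson(xs, ys)
        if coefficient is not None and coefficient > threshold:
            flagged.append((a, b, round(coefficient, 3)))
    return flagged
```

Pearson treats the integer scores as interval data; a rank-based measure may be preferable for ordinal scales, and the choice here is made only for simplicity.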