Preface

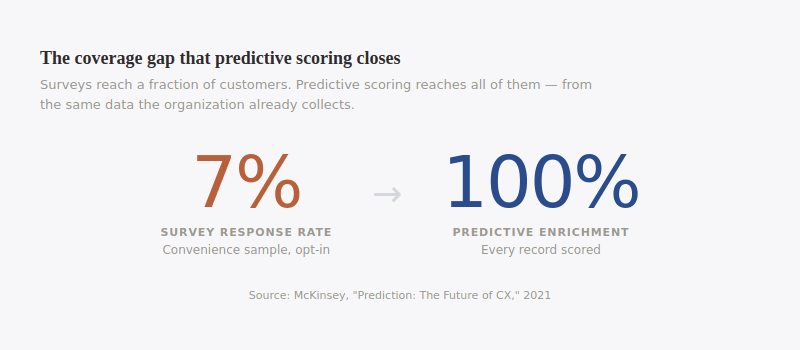

Predictive scoring is the most accessible way to extract insight from unstructured data with LLMs. It produces the same metrics the enterprise already tracks (CSAT, NPS, CES), marrying new techniques with well-understood concepts. With AI, these scores are no longer limited by survey response rates: they are derived from every interaction, not from the five percent of customers who answer a survey.

The core strength of generative AI is pattern recognition: a model must first read and understand before it can respond, and the accuracy of its response depends on how well it understood. Our methodology leans into that strength. Scoring offers a strong foundation for establishing reliable, harmonized metrics across every customer channel. Joined with transactional and operational data, those metrics open statistical testing and causal inference to AI agents.

This guide covers the background, the validation evidence, and a step-by-step path for deploying predictive scoring as the first step in developing trusted AI analytics practices for the enterprise.

I. Getting Started with LLMs & Customer Data

Every company generates enormous volumes of language data resulting from customer interactions: chat transcripts, call recordings, email threads, reviews, survey responses, CRM notes. Buried in that language are the reasons people stay, leave, renew, complain, and recommend. Together it is the largest pool of customer data in the enterprise, and most of it has never been analyzed.

A growing number of vendors promise the ability to extract insights from this dataset. However, data teams continue to struggle with how best to operationalize these tools alongside existing data analytics practices, and more broadly, with how to build stakeholder and executive trust in AI-driven analysis.

Here we detail the first steps on a path forward. We discuss methods and describe how LLM annotation can be applied as an extension of well-understood methodologies, as well as how this approach serves as a critical step in making unstructured data agent-ready.

What is LLM Annotation?

LLMs read language data and execute analytical tasks that add labels. The approach is both simple and powerful because language carries rich signals the enterprise has never been able to use. Consider one line from a support transcript:

"I've called three times and nobody can tell me why my bill doubled."

One sentence. Four analytical signals: dissatisfaction, process failure, churn risk, billing defect. A human reader parses all four without effort. A data warehouse cannot parse any of them, because the sentence is not a field. And text is invisible to every other analytical tool the organization runs. This is a structural problem: the inability to convert language into usable data in tables.

Existing methods fall short.

Keyword rules catch only what you already know to look for, and they misfire often. Manual tagging captures only what an internal team has the hours to read, and two taggers will label the same record differently often enough that the label column is unreliable before it leaves the spreadsheet. Surveys sample the five percent of customers who opt in to self-reporting. Traditional machine learning demands large investments in time and infrastructure for models that cannot adapt without retraining. These methods have been tolerated for decades because nothing else could operate at the intersection of language comprehension and enterprise scale.

Large language models sit at that intersection. An LLM reads every record in a dataset, executes defined analytical tasks, and outputs structured fields (sentiment, scores, topics, themes) as new columns on the same tables the warehouse already holds. A `predicted_csat` column sits next to `session_id`, `customer_id`, and `closed_at`, at the same grain as the interaction itself, and joinable to orders, subscriptions, and product events on the keys the data team already uses.
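The pattern described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: `stub_model` is a hypothetical placeholder standing in for a real LLM call, the `Annotation` fields and the 1 to 5 CSAT scale are assumptions, and the sample rows are invented for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Annotation:
    predicted_csat: int   # assumed scale: 1 (very dissatisfied) to 5 (very satisfied)
    topics: List[str]


def stub_model(transcript: str) -> Annotation:
    """Hypothetical placeholder for an LLM call; returns canned output."""
    if "bill doubled" in transcript:
        return Annotation(predicted_csat=1, topics=["billing", "repeat contact"])
    return Annotation(predicted_csat=4, topics=["general"])


def annotate(rows: List[Dict], model: Callable[[str], Annotation]) -> List[Dict]:
    """Return copies of the rows with the model's fields added as new columns."""
    annotated_rows = []
    for row in rows:
        ann = model(row["transcript"])
        # Validate the score before it reaches the warehouse.
        if not 1 <= ann.predicted_csat <= 5:
            raise ValueError(f"score out of range for {row['session_id']}")
        annotated_rows.append(
            {**row, "predicted_csat": ann.predicted_csat, "topics": ann.topics}
        )
    return annotated_rows


# Invented sample rows at the grain of one interaction.
interactions = [
    {"session_id": "s-001", "customer_id": "c-17", "closed_at": "2024-03-02",
     "transcript": "I've called three times and nobody can tell me why my bill doubled."},
    {"session_id": "s-002", "customer_id": "c-42", "closed_at": "2024-03-02",
     "transcript": "Thanks, that fixed it."},
]

annotated = annotate(interactions, stub_model)
for row in annotated:
    print(row["session_id"], row["customer_id"], row["predicted_csat"], row["topics"])
```

Because the original keys (`session_id`, `customer_id`, `closed_at`) pass through untouched, the new columns stay joinable on whatever keys the warehouse already uses. Passing the model in as a callable keeps the sketch independent of any particular vendor API.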

This is not faster search, and it is not better automated tagging. It is a different kind of infrastructure: unstructured language in, a meaning layer of structured, queryable data out. Every record. Consistency no human team can match.

Where to get started?

Predictive scoring offers a natural on-ramp to enterprise adoption because it aligns with existing concepts. LLMs extract established customer metrics (CSAT, NPS, CES) directly from text, producing wider coverage for metrics the organization already tracks.

Instead of a survey, the score comes from the interaction itself. Instead of a convenience sample, every record is scored. The output is a structured field in the warehouse, joinable with operational and transactional data. Once it joins, the full range of signal within language data becomes available for segmentation and statistical testing, and ultimately serves as a key input for agentic analysis and causal inference.

II. Proving the Value of Predictive Scoring

Predictive scoring is the on-ramp to LLM-based enrichment not because it is the most sophisticated application, but because it is the most immediately adoptable one.

Traditional customer metrics are already well understood across the enterprise. A predicted CSAT of 2 means what a survey CSAT of 2 means. The business rules for acting on it (customer recovery, coaching triggers, alerting logic) already exist. Data teams do not need to build new interpretive frameworks. Operations teams do not need to learn a new metric. Executives do not need to be convinced of the value.

Predictive Scoring as Infrastructure:

The underlying value that predictive scoring provides for a data team is not limited to wider coverage of satisfaction scores. It is the structured field it creates.

Every predicted CSAT, transactional NPS score, and derived effort rating has a value range and a record identifier. Each one joins with transaction records, product data, operational metrics, customer tenure, revenue, and contract terms.

• What is the relationship between predicted effort and renewal?

• Do customers who interact with a specific feature show different satisfaction profiles?

• Does agent satisfaction correlate with handle time?

These questions have been unanswerable using unstructured data because it could not be analyzed alongside structured data at scale. Now it can. The analytical machinery that has been running on transactional and operational data for years now has customer language as an input.
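The first question above, the relationship between predicted effort and renewal, can be sketched as a join followed by a grouped rate. All of the data below is invented toy data, and the names `predicted_effort`, `renewal_rate_by_effort`, and the threshold of 4 are assumptions for illustration. The sketch also assumes a scale on which a higher number means more customer effort.

```python
from collections import defaultdict
from typing import Dict, List

# Toy output of a scoring step: one predicted effort score per customer.
scores = [
    {"customer_id": "c-1", "predicted_effort": 1},
    {"customer_id": "c-2", "predicted_effort": 2},
    {"customer_id": "c-3", "predicted_effort": 5},
    {"customer_id": "c-4", "predicted_effort": 4},
    {"customer_id": "c-5", "predicted_effort": 5},
    {"customer_id": "c-6", "predicted_effort": 2},
    {"customer_id": "c-7", "predicted_effort": 3},  # no renewal record yet
]

# Toy structured data: whether each customer renewed.
renewals = {
    "c-1": True, "c-2": True, "c-3": False,
    "c-4": True, "c-5": False, "c-6": False,
}


def renewal_rate_by_effort(
    scores: List[Dict], renewals: Dict[str, bool], high_effort_threshold: int = 4
) -> Dict[str, float]:
    """Inner-join scores to renewals on customer_id, then compute renewal rate per band."""
    counts = defaultdict(lambda: [0, 0])  # band -> [renewed, total]
    for row in scores:
        customer_id = row["customer_id"]
        if customer_id not in renewals:
            continue  # inner join: skip customers without a renewal outcome
        band = ("high_effort" if row["predicted_effort"] >= high_effort_threshold
                else "low_effort")
        counts[band][0] += int(renewals[customer_id])
        counts[band][1] += 1
    return {band: renewed / total for band, (renewed, total) in counts.items()}


rates = renewal_rate_by_effort(scores, renewals)
for band, rate in sorted(rates.items()):
    print(f"{band}: {rate:.2f}")
```

A gap between the two bands is descriptive only. Whether it is statistically meaningful, or causal, is a separate question that needs a proper test and far more than six customers.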

Enabling Stronger Agentic Analysis:

The LLM classification methods used for predictive scoring unlock a wide range of modalities for agentic analysis. A data pipeline that transforms language data into structured dimensions, which we term a meaning layer, is a prerequisite for leveraging agentic workflows across structured and unstructured data.

Consider an agent that notices predicted outcome satisfaction dropping for a product line, traces the drop to a policy change two weeks earlier, tests its significance, and surfaces the finding. That is not a dashboard, and it is not traditional number crunching. It is an agent-driven process supported by a more robust omnichannel infrastructure.
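The "tests significance" step in that scenario could take many forms. The sketch below uses a one-sided permutation test on the difference in mean predicted score before and after a change. It is one possible choice, not a method the guide prescribes. The scores are invented toy values, and `permutation_p_value` is a hypothetical helper name.

```python
import random
from statistics import mean
from typing import Sequence


def permutation_p_value(
    before: Sequence[float],
    after: Sequence[float],
    n_permutations: int = 5000,
    seed: int = 0,
) -> float:
    """One-sided test of whether mean(before) exceeds mean(after) by more than chance.

    Repeatedly shuffles the pooled scores, splits them into two groups of the
    original sizes, and counts how often the shuffled difference is at least
    as large as the observed one.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    observed = mean(before) - mean(after)
    pooled = list(before) + list(after)
    n_before = len(before)
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        diff = mean(pooled[:n_before]) - mean(pooled[n_before:])
        if diff >= observed:
            at_least_as_extreme += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (at_least_as_extreme + 1) / (n_permutations + 1)


# Toy predicted satisfaction scores around a hypothetical policy change.
before_change = [4, 5, 4, 4, 5, 3, 4, 5, 4, 4]
after_change = [3, 2, 3, 4, 2, 3, 2, 3, 3, 2]

p_value = permutation_p_value(before_change, after_change)
print(f"mean before: {mean(before_change):.1f}, mean after: {mean(after_change):.1f}")
print(f"one-sided p-value: {p_value:.4f}")
```

A permutation test makes few distributional assumptions, which suits ordinal scores on a short scale. It does not establish that the policy change caused the drop. That attribution still depends on the joined operational data and on ruling out other changes in the same window.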